One of the accusations most commonly levelled against Nate Silver and his enterprise is that probabilistic predictions are unfalsifiable. “He never said the Democrats would win the House. He only said there was an 85% chance. So if they don’t win, he has an out.” This is true only if we focus on the top-level prediction, and ignore all the smaller predictions that went into it. (Except in the trivial sense that you can’t say it’s impossible that a fair coin just happened to come up heads 20 times in a row.)

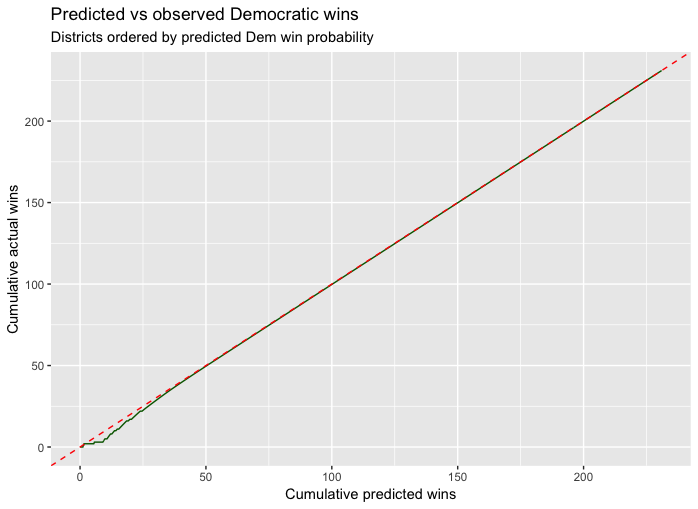

So, since Silver can be tested, I thought I should see how 538’s predictions stood up in the 2018 US House election. I took their predictions of the probability of victory for a Democratic candidate in all 435 congressional districts (I used their “Deluxe” prediction) from the morning of 6 November. (I should perhaps note here that one third of the districts had estimates of 0 (31 districts) or 1 (113 districts), so a victory for the wrong candidate in any one of these districts would have been a black mark for the model.) I ordered the districts by predicted probability, from smallest to largest, and computed the cumulative predicted number of seats. I plot this against the cumulative actual number of seats won, taking the current leader as the winner in the 11 districts where there is no definite decision yet.

The predicted number of seats won by Democrats was 231.4, impressively close to the actual 231 won. But that’s not the standard we are judging them by, and in this plot (and the ones to follow) I have normalised the predicted and observed totals to be the same. I’m looking at the cumulative fractions of a seat contributed by each district. If the predicted probabilities are accurate, we would expect the plot (in green) to lie very close to the line with slope 1 (dashed red). It certainly does look close, but the scale doesn’t make it easy to see the differences. So here is the plot of the prediction error, the difference between the red dashed line and the green curve, against the cumulative prediction:

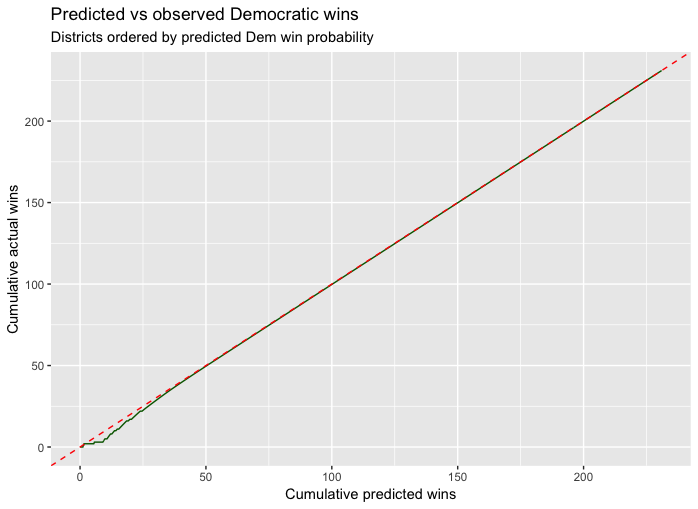
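For readers who want to reproduce this kind of curve, here is a minimal sketch of the calculation as I read it from the description above. The function name `cumulative_error`, the way the totals are normalised (rescaling the observed seats), and the sign convention (positive means overprediction of Democratic seats) are my assumptions, not necessarily what was used for the plots.

```python
import numpy as np

def cumulative_error(probs, outcomes):
    """Return (cumulative prediction, cumulative prediction minus cumulative outcome).

    probs    -- predicted probability of a Democratic win, one per district
    outcomes -- 1 if the Democrat won (or currently leads), else 0
    """
    probs = np.asarray(probs, dtype=float)
    outcomes = np.asarray(outcomes, dtype=float)

    # Order districts from least to most likely Democratic win.
    order = np.argsort(probs, kind="stable")
    p, y = probs[order], outcomes[order]

    # Assumption: normalise by rescaling observed seats so both totals agree.
    scale = p.sum() / y.sum()

    cum_pred = np.cumsum(p)
    cum_obs = np.cumsum(y) * scale
    return cum_pred, cum_pred - cum_obs

# Toy example with four made-up districts (not 538 data).
x, err = cumulative_error([0.0, 0.5, 0.5, 1.0], [0, 0, 1, 1])
```

Plotting `err` against `x` gives a curve of the same kind as the one above; by construction it ends at 0 because the totals have been matched.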

There certainly seems to have been some overestimation of Democratic chances at the low end, leading to a maximum cumulative overprediction of about 6 (which comes at district 155, that is, the 155th most Republican district). It’s not obvious whether these differences are worse than you would expect by chance. So in the next plot we make two comparisons. The red curve replaces the true outcomes with simulated outcomes, where we assume the 538 probabilities are exactly right. This is the best-case scenario. (We only plot it out to 100 cumulative seats, because the action is all at the low end. The last 150 districts have essentially no randomness.) The red curve and the green curve look very similar, except for the direction, and the direction of the error is random. The most extreme error in the simulated election result is a bit more than 5.
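The best-case comparison can be sketched as follows: treat each district as an independent coin flip with the published probability, and record the largest cumulative error in each simulated election. The independence assumption, the function name `simulate_max_error`, and the decision to skip the normalisation of totals are my simplifications for illustration.

```python
import numpy as np

def simulate_max_error(probs, n_sims=1000, seed=0):
    """Largest absolute cumulative error in each of n_sims simulated elections,
    assuming the probabilities are exactly right and districts are independent."""
    rng = np.random.default_rng(seed)
    p = np.sort(np.asarray(probs, dtype=float))

    # Each row is one simulated election: True where the Democrat wins.
    wins = rng.random((n_sims, p.size)) < p

    # Cumulative predicted seats minus cumulative simulated seats.
    # (Simplification: totals are not normalised here.)
    err = np.cumsum(p) - np.cumsum(wins, axis=1)
    return np.abs(err).max(axis=1)

# Districts rated exactly 0 or 1 contribute no randomness at all.
print(simulate_max_error([0.0, 0.0, 1.0, 1.0], n_sims=5))
```

Comparing the observed maximum error with the spread of these simulated maxima gives a rough sense of whether the real curve is unusual.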

What would the curve look like if Silver had cheated, by trying to make his predictions all look less certain, to give himself an out when they go wrong? We imagine an alternative psephologist, call him Nate Tarnished, who has access to the exact true probabilities for Democrats to win each district, but who hedges his bets by reporting a probability closer to 1/2. (As an example, we apply the cumulative beta(1/2,1/2) distribution function to the true probability. This leaves 0, 1/2, and 1 unchanged, but .001 would get pushed up to .02, .05 is pushed up to .14, and .2 becomes .3. Similarly, .999 becomes .98 and .8 drops to .7. Not huge changes, but enough to create more wiggle room after the fact.)
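The hedging transform is easy to check numerically. The beta(1/2,1/2) distribution is the arcsine distribution, whose cumulative distribution function has the closed form (2/π)·arcsin(√p). The function name `hedge` below is mine, chosen for illustration.

```python
import math

def hedge(p):
    """CDF of the beta(1/2, 1/2) (arcsine) distribution at p, for 0 <= p <= 1.

    Maps a true probability to a reported probability closer to 1/2.
    """
    return (2.0 / math.pi) * math.asin(math.sqrt(p))

# Print true probability -> hedged probability for the values quoted in the text.
for p in (0.001, 0.05, 0.2, 0.5, 0.8, 0.999):
    print(f"{p:.3f} -> {hedge(p):.2f}")
```

Rounded to two decimal places, this reproduces the figures quoted above.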

In this case, we would expect to accumulate much more excess cumulative predicted probability on the left side. And this is exactly what we illustrate with the blue curve, where the error repeatedly rises nearly to 10, before slowly declining to 0.

I’d say the performance of the 538 models in this election was impressive. A better test would be to look at the predicted vote shares in all 435 districts. This would require that I manually enter all of the results, since they don’t seem to be available to download. Perhaps I’ll do that some day.